About

How's it going?! I'm Salvador, a Master's student in Computer Science at The University of Texas at Austin. I've interned twice as a Software Engineer at Google and once as a Machine Learning Engineer at Uber, and I enjoy doing research! So far I've published six peer-reviewed papers as first author (ranging from Theoretical ML to Fairness and Responsible AI). I hold dual bachelor's degrees in Computer Science and Mathematics from The University of Texas at El Paso, with a concentration in Statistics and Data Analytics.

Research interests: Multimodal AI (Vision + Text), Natural Language Processing, Agentic AI, and Responsible AI (e.g. Alignment).

Hobbies: Soccer, chess, weightlifting, reading Dostoevsky (favorite: The Brothers Karamazov), and sustainable gardening.

Publications

Google Scholar →

Projects

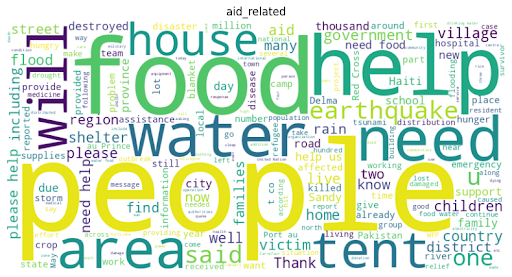

Disaster Response Message Classification

Built an NLP pipeline for disaster response message classification using classical ML and transformer methods. Trained vectorization models to predict urgent needs (water, food, shelter, aid) from crisis messages. Created a RAG pipeline using FAISS-backed retrieval and a FastAPI app for semantic search over 26k+ disaster reports.

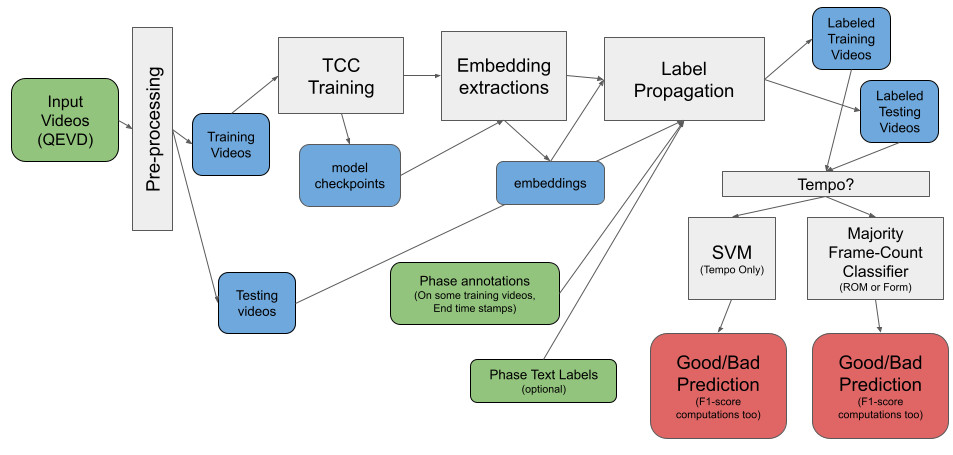

Towards Maximizing Muscle Growth in Motion

Built a video exercise evaluation system to classify rep quality across tempo, form, and range of motion (ROM). Compared multiple approaches, including a VLM (InternVideo2), pose-based analysis, and temporal alignment (TCC), highlighting tradeoffs in general video understanding, with a focus on tempo detection for hypertrophy training.

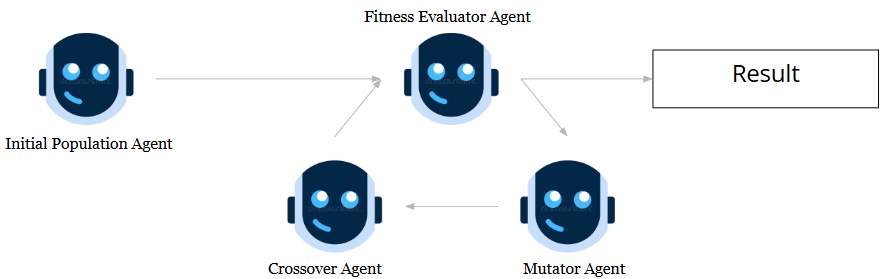

Evolutionary Optimization with Multi-Agent System

Built an evolutionary-inspired multi-agent LLM system where agents act as population generator, mutator, crossover operator, and fitness evaluator. Used LLM-based evaluation with few-shot prompting and showed consistent performance gains over simpler agent baselines in optimization tasks.

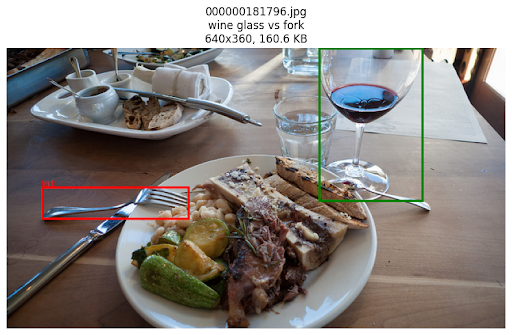

Benchmarking Spatial Reasoning in Vision-Language Models

Benchmarked spatial reasoning and grounding ability in vision-language models, focusing on left–right consistency and size perception. Evaluated SOTA VLMs across 5k+ COCO images and 700+ synthetic scenes to measure robustness on spatial relationship tasks.

Multimodal Expert Sports Coaching System

Developing a multimodal AI sports coach that generates expert-style feedback from exercise videos using vision-language models. Built evaluation pipelines aligned with human coaching judgments using 30k+ coach-commentary samples and large-scale video data.